© 2019 Chris McVeigh

By Maya Rose and Teresa Ober, Ph.D*

* authors contributed equally to this blog post

Introduction

The COVID-19 pandemic has created challenges for graduate students and early career researchers, wreaking havoc on their research plans and causing as-yet-unknown downstream consequences for career opportunities. Though we don’t yet know the long-term impact of the pandemic on our professional journeys, we offer some personal reflections on the challenges we faced in adapting our research plans to the current circumstances. We situate these reflections in the context of general questions pertaining to our research during the pandemic. Given the differing constraints of our research, we faced different types of challenges along the way. In addition to these reflections, we provide a list of general tips and resources for considering how to adapt your research. Our hope is that these insights may be useful to other graduate students and early career researchers experiencing similar problems in their pursuit of research.

What does your research focus on?

Teresa: For the past year, I have been a postdoctoral research associate in the Learning Analytics and Measurement in Behavioral Sciences (LAMBS) Lab at the University of Notre Dame under the direction of Dr. Ying Cheng. Before that, I was a doctoral student in Educational Psychology at the Graduate Center of the City University of New York (CUNY). As a researcher now in the LAMBS Lab, my most recent work has examined measurement issues in interactive learning and assessment platforms, such as the AP-CAT platform. Through this line of work, my collaborators and I are examining the associations between student engagement and learning, focusing particularly on the viability of using process data variables (derived from digital log files from the use of an interactive online learning and assessment platform) as indicators of engagement. This work uses the context of introductory high school and college statistics education to examine these factors. Though this comprises the crux of my current work, I have also conducted research on the use of game-based digital technologies to promote engagement and learning, the associations between executive functions and reading, and the Scholarship of Teaching and Learning. I am currently working on several projects that aim to investigate and intervene on issues affecting individuals from underrepresented groups in STEM. More information about some of my latest work can be found on my website here: tmober.github.io.

Maya: I am currently a PhD candidate in the Educational Psychology program at the Graduate Center, CUNY and work as a graduate research assistant in the Language Learning Laboratory (PI: Dr. Patricia Brooks) and the Developmental Neurolinguistics Laboratory (PI: Dr. Valerie Shafer). My dissertation, titled “Does Speaking Improve Comprehension of Turkish as a Foreign Language? A Computer-Assisted Language Learning and Event-Related Potential (ERP) Study,” examines how test variations in a computer-assisted language learning (CALL) platform promote comprehension of Turkish grammar and neurolinguistic processing (as evidenced by ERPs associated with processing of grammatical markers). I recently wrote up the pilot results of this study for a poster at the Association for Psychological Science Conference (Rose et al., 2020). The dissertation involves two sessions. During session 1, participants come into the lab for the behavioral portion, which includes the CALL platform and some language learning aptitude tests. I created the CALL platform using PsychoPy, a free open-source software package for experimental psychological research written in Python. The aptitude measures are presented as paper tasks or via E-Prime. During session 2, participants come back to the lab for the ERP session, where they complete a grammatical violation paradigm while wearing a 16-electrode EEG cap. Both sessions are completed synchronously in person.

A few of my other research projects involve working on large secondary datasets. For example, Dr. Jay Verkuilen and I recently presented some of our work involving graphical analysis approaches to reaction time data.

How has COVID affected your research plans?

Teresa: The COVID-19 pandemic has certainly turned everyday research practices on their head. In my experience working on a project that involved data collection in the Spring 2020 semester, I started to more fully realize the benefits of collecting data online given the circumstances. The study involved high school students’ use of an online computerized assessment and data collection platform. We were fortunate in the sense that the participants enrolled could still complete the study from home. Nevertheless, there are reasons to be concerned about the quality of online and remote data collection, as so many uncontrollable factors could affect participants’ performance (including simply the stress from working at home through the pandemic). In an effort to account for the impact of the students’ instructional transition from face-to-face to online, we administered a self-report instrument to try to capture variability due to perceived instructional discontinuity. While this was not a foolproof plan, as self-report instruments, particularly those that are new and under development, could compromise face validity, it did provide a snapshot of how students in the study were directly impacted by the transition from in-person teaching to online teaching as a result of the pandemic. Now that the data has been collected, there are other factors to consider. Since the study also involved estimation of item parameters based on item response theory, there is ongoing discussion about whether or not to include responses after mid-March in the estimation of item parameters, given the circumstances and the potential that performance may be less reflective of students’ actual ability than in previous cycles of data collection.

For another project, which aimed to examine the effects of different test formats on cognitive and non-cognitive factors thought to influence student learning and performance, plans were initially in place to collect data in person. Due to the circumstances, we were able to modify the study protocol and administration to an online format with what we hope is little compromise to the actual research questions and study design. Recruitment for this study is now underway.

In certain circumstances, it is not feasible to administer a new scale to capture variability likely due to the unforeseeable event (in this case, the pandemic) or to modify the data collection procedure to meet safe social distancing practices. In such cases, there always remains the option to put on hold a project that no longer seems realistic. As unfortunate as this seems, one’s efforts could be better concentrated on other projects directly related to one’s line of work (see recommendations below).

Maya: In March 2020, the COVID-19 pandemic swept through New York City. At that time, I was not sure how it would impact my research plans, so I continued designing my experiment. On April 27th, I defended my dissertation proposal largely knowing that collection of EEG data would not be possible during the pandemic. After all, EEG involves close contact between the researcher and participant. As long as labs are closed, the EEG portion of my dissertation is not possible. On the other hand, with some tweaking, the behavioral session is still possible.

Luckily, the other research projects I am involved in have not been impacted by COVID, largely because they involve secondary data/open big datasets. If you are starting to think about research or dissertation topics, I highly recommend exploring big open datasets. For example, the Inter-university Consortium for Political and Social Research (ICPSR) has many datasets relevant to social and behavioral sciences.

How have you adapted to the challenges brought about by COVID?

Teresa: For all of my projects that are collecting data, recruitment of participants, administration of the study, and provision of participant compensation are now fully remote and online. Recruitment of participants has largely occurred through ads in weekly newsletters to the general student body, which has been surprisingly effective. Since data quality monitoring is obviously a concern given the remote study administration, we will likely also consider how carelessness might manifest in the data and apply theory-based approaches to data cleaning.
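To make the idea of screening for carelessness more concrete, here is a minimal sketch in Python. It is not a procedure taken from the studies described here: it assumes two commonly used indicators of careless responding (the longest run of identical consecutive answers, often called a longstring index, and a very fast median response time), and the function names and cutoffs (`max_run`, `min_seconds`) are hypothetical values that would need to be justified for a given instrument.

```python
from itertools import groupby
from statistics import median


def longstring(responses):
    """Length of the longest run of identical consecutive answers."""
    return max((sum(1 for _ in run) for _, run in groupby(responses)), default=0)


def flag_careless(responses, seconds_per_item, max_run=8, min_seconds=2.0):
    """Return the reasons a record looks careless (empty list if none).

    max_run and min_seconds are illustrative cutoffs, not recommendations.
    """
    reasons = []
    if longstring(responses) >= max_run:
        reasons.append("long identical-response run")
    if seconds_per_item and median(seconds_per_item) < min_seconds:
        reasons.append("very fast median response time")
    return reasons


# Hypothetical participant: the same answer ten times, at a plausible pace.
straightliner = flag_careless([3] * 10, [5.0] * 10)

# Hypothetical participant: varied answers, but about half a second per item.
speeder = flag_careless([1, 2, 3, 4, 1, 2, 3, 4, 1, 2], [0.5] * 10)
```

Flags like these are probably best treated as prompts for a closer look at a record rather than as automatic exclusion rules.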

In addition to adapting ongoing research activities, I also felt that my skills could be used towards forming a better understanding of teaching and learning, specifically within the context of the crisis. Some of my current research examines how teachers and students have themselves adapted to the circumstances surrounding the COVID-19 pandemic. For one ongoing long-term LAMBS Lab project that has involved partnering with high school teachers and their students throughout an academic year, we wanted to know a bit more about the experience of teachers as they transitioned the course from in-person to a fully remote and online format in the midst of the pandemic. I conducted interviews with teachers following a semi-structured interview protocol and conducted a qualitative-quantitative text analysis to understand common issues, as well as the prevalence of certain issues mentioned in their responses. From this, I learned how tools we use all the time, such as Zoom, can be great for collecting qualitative data from interviews, particularly when a sample size is relatively small.

Other work I’ve recently conducted has focused on students. In collaboration with colleagues at CUNY, I recently examined attrition as an outcome of a study in which we wanted to see whether students enrolled in a college course were more or less likely to submit online homework assignments at specific time points across the Spring 2020 semester. The study examined the challenges New York City students reported facing during the semester, as they tried to stay on top of coursework while living within an initial epicenter of the COVID-19 outbreak.

Maya: At the start of the summer of 2020, I began to think about ways in which I could deliver the behavioral portion (session 1) of my experiment remotely and online. I needed to find a way to digitize the paper tasks I used and remotely deliver the tasks that I had in E-Prime and PsychoPy. Although my research questions remained the same, I was required to submit an amendment to the IRB describing the online version of my experiment. As part of this, I created an online consent form that aligned with CUNY’s oral/web-based consent form template. The amendment also outlined each virtual element of my experiment. Below I list these elements and their respective virtual platforms, with the modalities that I use in the in-person version of my experiment in parentheses:

- Consent Form and Background Questionnaire (paper-task) = Qualtrics

- Language Aptitude Measures (paper-task and E-Prime) = Qualtrics and E-Prime Go

- Computer-Assisted Language Learning Platform (PsychoPy) = Pavlovia (described further below)

As you can see from this list, elements of my experiment are delivered on disparate platforms. Therefore, it is important not only to test each one individually, but also to test the flow from one element to the next. Below I describe my experience thus far with each of these platforms:

- Qualtrics: I was able to design my consent form and background questionnaire in Qualtrics but I had to think about how participants would get to Qualtrics from SONA (the online system that we use to recruit participants from the undergraduate subject pool). While I have not started data collection yet, I know that students can log onto SONA from home (as they did before the pandemic). I also created a timed fluid intelligence task in Qualtrics (which was formerly in paper form). Qualtrics has a timing feature that allowed me to do this.

- Pavlovia: Pavlovia is a site created by the PsychoPy team that makes it possible to run studies online. It also has a repository of public experiments that you can explore! By using Pavlovia, you can keep track of your workflow and manage version control through its GitLab repository. Jordan Gallant recently posted a helpful tutorial on getting started with Pavlovia. As I noted above, I had a working version of my CALL platform in PsychoPy. With that said, before putting this experiment online using Pavlovia, I had to re-create it because the version that worked in my lab was not working on my personal machine. This may have been an issue with a microphone component that I used in PsychoPy or the fact that my personal machine uses a different OS than my lab machine. These may seem like tiny details, but they are important things to consider! Keep in mind that the integrated microphone component that works in PsychoPy does not work with Pavlovia as of now. Therefore, if your experiment involves speech production, you will have to think about external methods of recording. Now that I have created a working version of my experiment in PsychoPy on my local machine, I am working on designing a suitable version for Pavlovia. If you have any questions about this process, please let me know. The PsychoPy forum is very helpful too!

- E-Prime Go: This will allow me to deliver my E-Prime tasks online to my participants. With that said, you need E-Prime 3 to use E-Prime Go. The cost of updating a single-user license is about $800. I have not used E-Prime Go yet, but I have heard that it works well, with some caveats. For example, your participants must be using a Windows machine to use E-Prime Go.
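The hand-off from one platform to the next can often be handled by carrying a participant identifier along in the link that sends participants to the next step. The sketch below is a generic illustration using only Python's standard library. The URLs and the `participant` parameter name are hypothetical placeholders, not the actual settings for SONA, Qualtrics, or Pavlovia, so check each platform's documentation for the parameter names it expects.

```python
from urllib.parse import parse_qs, parse_qsl, urlencode, urlparse, urlunparse


def with_params(base_url, **params):
    """Return base_url with the given query parameters added or replaced."""
    parts = urlparse(base_url)
    query = dict(parse_qsl(parts.query))
    query.update(params)
    return urlunparse(parts._replace(query=urlencode(query)))


def read_param(url, name):
    """Return the first value of a query parameter, or None if it is absent."""
    values = parse_qs(urlparse(url).query).get(name)
    return values[0] if values else None


# Hypothetical link for the second step of a study; "P001" stands in for
# whatever ID the recruitment system assigned to this participant.
next_step = with_params("https://example.org/step2?lang=en", participant="P001")

# At the next step, the same ID can be read back out of the incoming URL.
participant_id = read_param(next_step, "participant")
```

Running through the full chain with a test ID before data collection begins is a quick way to confirm that the same identifier shows up in every platform's data file.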

What suggestions do you have for other graduate students and early career researchers who are experiencing similar research-related problems in light of the COVID-19 pandemic?

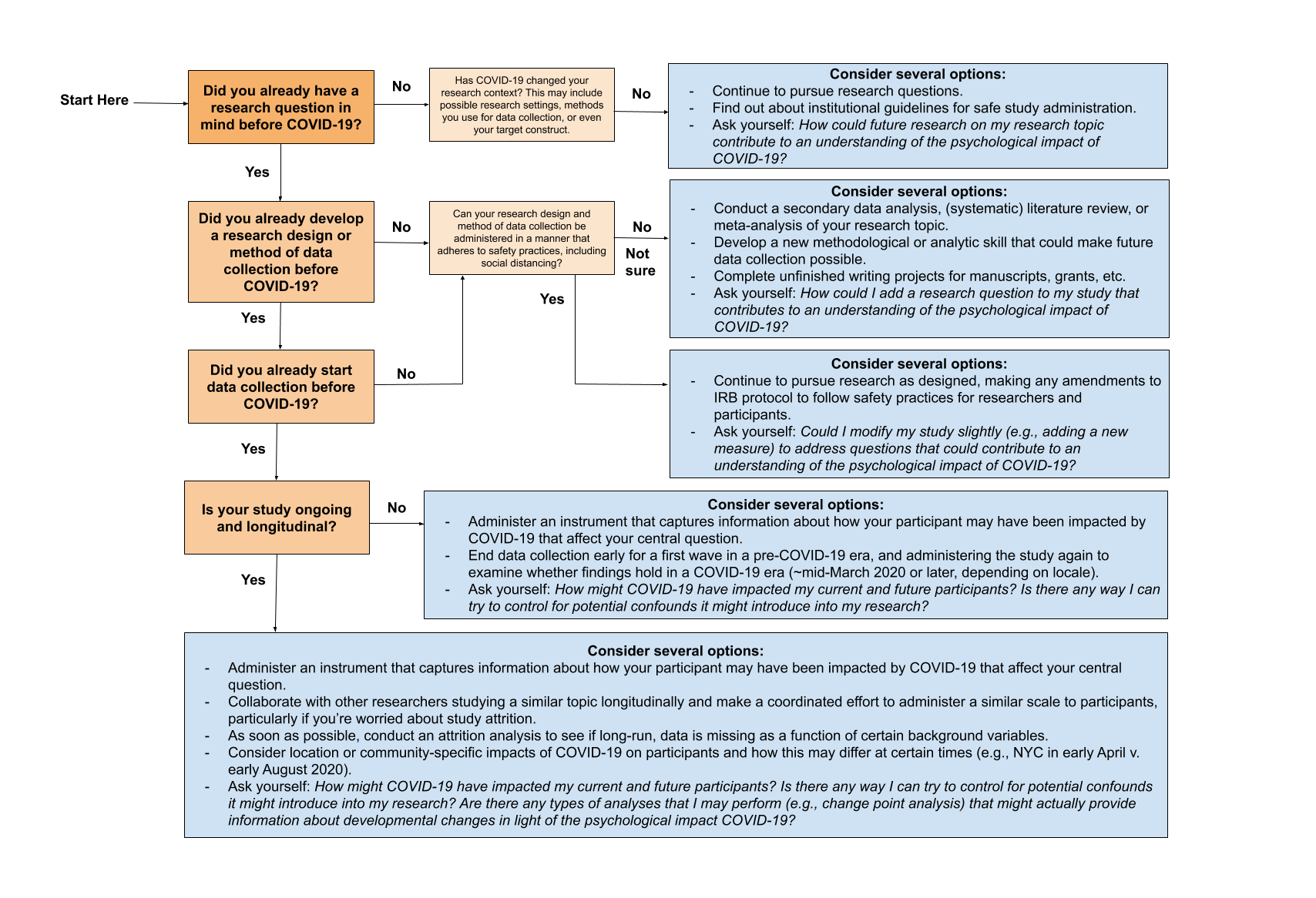

Figure 1. Options for adapting current and future research (Ober, 2020)

Finish a Writing Project, Learn a New Skill

While many labs on campuses remain partly shuttered to in-person data collection, this may be an opportune time to finish an ongoing writing project or even dedicate time to learning a new skill. A number of professional societies and organizations, such as Division 15 (Educational Psychology) of the American Psychological Association, the National Council on Measurement in Education, and the Inter-university Consortium for Political and Social Research, offer free online webinars on innovative methods and emerging issues in educational research. Some virtual conferences may also maintain online video repositories so that attendees can continue viewing presentations.

Consider Secondary Data, Run an Existing Experimental Study Online

As mentioned above, consider exploring secondary data! You won’t need to collect the data and can move right to the analysis. In exploring big open datasets, you will also become familiar with the data organization process. Although these datasets often come with data dictionaries and codebooks, you will have to devote some time to familiarizing yourself with the data. Often the data will not be organized or presented in the way you want it to be. Therefore, use big open datasets if you want experience with both data cleaning and analysis. With that said, there are a lot of opportunities to collect primary data online with existing experimental designs. This is a great option if you don’t have time to design an online experiment from scratch. Pavlovia allows you to explore publicly available experiments.

Modify Current Research Plans

Some research can be modified to meet the needs of the current circumstances. Before carrying out any changes to your research protocol, it is important to check whether an IRB amendment is warranted and to receive the necessary approvals. Since this process can take some time, it is good to plan well ahead. Because many staff in administrative offices at college and university campuses are likely to be working from home, turnaround may be slower than normal. Other logistics of the study administration process might also take additional time, such as announcing the study and recruiting potential participants, or finding an approved method for paying participants.

For researchers with access to college or university-provided participant recruitment pools (e.g., SONA), tapping into such pools may be effective for participant recruitment. Keep in mind that recruitment solely via such platforms may not be as effective as it would be under regular circumstances. For example, courses may not emphasize research participation for extra credit to the same extent they would under pre-COVID-19 circumstances. Other platforms that might help with online recruitment include, but are not limited to, the following:

- MTurk: Crowdsourcing marketplace that allows you to post “HITs” (Human Intelligence Tasks), such as completing a survey, through which you can recruit and compensate participants.

- Prolific: Platform for recruiting participants, even based on specific recruitment criteria.

Setting up an Online Study

There are a number of online data collection tools that researchers can consider when adapting their research. Such tools can be used for online study scripting and deployment of experimental studies. They can enable researchers to create a range of online studies, from simple questionnaires to reaction-time tasks to randomized controlled trials and complex multi-day training studies. There are many tools, a number of them free and open-source, including, but not limited to, the following:

- PsychoPy (Python-based; Option to use GUI or code editor)

- jsPsych (JavaScript)

- FindingFive (JavaScript)

- PennController for IBEX (JavaScript)

- Electron (JavaScript)

- Gorilla (Option to use GUI or code editor)

- Pavlovia (Online study deployment)

- Qualtrics (Online form builder) – If you are a student at the Graduate Center, you have access to Qualtrics. You can access Qualtrics by signing in using your GC network login here.

Before starting your online study, think about the disparate platforms you are using to collect your data. If you are attempting to streamline the flow of your study between different platforms and are using SONA, Pavlovia, and Qualtrics, here are some helpful links:

Some Final Thoughts

Without a doubt, it is unfortunate that graduate students and early career researchers are in a position that requires them to adapt so drastically to current circumstances. Such a crisis also warrants reflection, and thus researchers may want to ask themselves: How could future research on my topic contribute to an understanding of the psychological impact of the COVID-19 pandemic? We know very little about the long-term impact of the disease, let alone the long-term social and societal impact. Social and behavioral scientists can contribute by addressing important gaps in our understanding of the prevention of, impact of, and resilience to COVID-19 and related factors (Van Bavel et al., 2020). While not everyone’s research plans fit with this agenda, it is something for current and future generations of educational and psychological researchers to consider as we all take stock of the crisis and still forge a path ahead.

References

Van Bavel, J. J., Baicker, K., Boggio, P. S., et al. (2020). Using social and behavioural science to support COVID-19 pandemic response. Nature Human Behaviour, 4, 460–471. https://doi.org/10.1038/s41562-020-0884-z

Ober, T. M. (2020, August). Adapting your research methods in response to COVID-19: Perspective from a recent Ph.D. In P. Christidis (Chair), Adapting your research methods in response to COVID-19. Panel organized by the American Psychological Association Graduate Education Office, August 13, 2020. Recording of the presentation available online: https://www.apa.org/members/content/research-methods-covid-19

Rose, M. C., Lodhi, A., & Brooks, P. J. (2020, June 1-Sep 1). Does speaking improve comprehension of Turkish as a foreign language? A computer-assisted language learning study. [Poster presentation]. APS 2020 Poster Showcase, Online. https://doi.org/10.13140/RG.2.2.22011.13600

Teresa M. Ober, Ph.D., is a Postdoctoral Research Associate in the Department of Psychology at the University of Notre Dame working in the Learning Analytics and Measurement in Behavioral Sciences (LAMBS) Lab. Teresa completed a Ph.D. in Educational Psychology with a specialization in Learning, Development and Instruction and a certificate in Instructional Technology and Pedagogy from the Graduate Center CUNY in 2019. While a doctoral student, Teresa served as the Co-chair of the Graduate Student Teaching Association (2017-2018) and the Co-Chair to Communications of the Doctoral and Graduate Students’ Council (2017-2019). Teresa’s current work focuses on the science of learning, particularly the use of learning analytics, online and educational technologies to support learning, within the content areas of literacy development, and more recently STEM education.

Maya C. Rose, M.Phil., is a PhD Candidate in Educational Psychology at the Graduate Center, CUNY (advisor: Dr. Patricia Brooks). Her research examines how test variations (e.g., spoken language production) and language learning aptitude affect adult second language comprehension and neurolinguistic processing (via event-related potentials). She works as a graduate research assistant in the Language Learning Laboratory (PI: Dr. Patricia Brooks) and the Developmental Neurolinguistics Laboratory (PI: Dr. Valerie Shafer), where she conducts ERP research examining neurolinguistic processing. For her dissertation, she is creating a computer-assisted language learning platform to teach Turkish nominal morphology and vocabulary in order to pinpoint the most effective methods for second language learning. Other lines of research include quantitative approaches for analyzing reaction time data with Dr. Jay Verkuilen, systematic reviews of foreign language learning motivation in collaboration with Dr. Anna Schwartz and Dr. Anastasiya Lipnevich, and assessing the efficacy of cognitive game-based trainings. Maya is also a Social Media Fellow with the Digital Initiatives at the Graduate Center and an editor for the Graduate Student Teaching Association Blog.
